DynamoDB on Amazon Web Services

DynamoDB is an Amazon managed NoSQL database.

The performance characteristics and client behaviour of DynamoDB are very different from those of traditional data stores such as relational databases. With a relational database, performance gradually decreases as load on the database increases. DynamoDB tables instead have a configurable read and write capacity, specified as the number of reads and writes per second the table will accept, and AWS charges based on the provisioned capacity. If a client exceeds this limit, DynamoDB rejects the read or write.
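Because over-capacity requests are rejected rather than slowed down, a client usually needs to retry them. The following is a minimal sketch of retrying with exponential backoff and jitter against a toy in-memory table. `FakeTable`, `ThroughputExceeded`, and `put_with_backoff` are hypothetical names invented for this illustration and are not part of any AWS library.

```python
import random
import time


class ThroughputExceeded(Exception):
    """Hypothetical stand-in for DynamoDB rejecting an over-capacity write."""


class FakeTable:
    """Toy in-memory table that accepts at most `capacity` writes per window."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.used = 0
        self.items = []

    def tick(self):
        # Simulates the start of a new one-second capacity window.
        self.used = 0

    def put(self, item):
        if self.used >= self.capacity:
            raise ThroughputExceeded("write capacity exceeded")
        self.used += 1
        self.items.append(item)


def put_with_backoff(table, item, max_attempts=5, base_delay=0.05, sleep=time.sleep):
    """Write `item`, retrying rejected writes. Returns the attempts used."""
    for attempt in range(max_attempts):
        try:
            table.put(item)
            return attempt + 1
        except ThroughputExceeded:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            # Full jitter: wait a random time up to base_delay * 2^attempt.
            sleep(random.uniform(0, base_delay * 2 ** attempt))
```

The `sleep` parameter is injectable so the behaviour can be exercised without real waiting, for example by passing a function that calls `table.tick()` to simulate the next capacity window.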

Our process is a batch job which will write approximately 10 million records to a DynamoDB table.

Ideally the job will complete in under an hour, so while the job executes, we will configure the DynamoDB table with a write capacity of 3000 (10,000,000 records / 60 minutes / 60 seconds ≈ 2,778 writes per second, rounded up to 3000 for headroom).
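The capacity arithmetic can be checked with a few lines of Python. This sketch assumes each record consumes exactly one unit of write capacity, which holds only for small items; the function name `required_write_capacity` is made up for this example.

```python
import math


def required_write_capacity(records, duration_seconds):
    """Minimum writes per second needed to finish within the duration."""
    return math.ceil(records / duration_seconds)


records = 10_000_000
one_hour = 60 * 60

# Minimum sustained rate to finish in exactly one hour.
print(required_write_capacity(records, one_hour))  # 2778

# Time to finish at the provisioned capacity of 3000 writes per second.
seconds_at_3000 = math.ceil(records / 3000)
print(seconds_at_3000)                 # 3334
print(round(seconds_at_3000 / 60, 1))  # 55.6 (minutes)
```

At 3000 writes per second the job finishes in roughly 56 minutes, which leaves a small margin for retried writes.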

DynamoDB is the NoSQL option at AWS, and its basic unit is the table, which stores items. DynamoDB Streams is a feature you can turn on to publish all changes to items as a stream, in near real time as the changes happen. When you turn on the feature, you choose what is written to the stream:

- Keys only: only the key attributes of the modified item.

- New image: the entire item, as it appears after it was modified.

- Old image: the entire item, as it appeared before it was modified.

- New and old images: both the new and the old images of the item.
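The four options above correspond to the `StreamViewType` values `KEYS_ONLY`, `NEW_IMAGE`, `OLD_IMAGE`, and `NEW_AND_OLD_IMAGES`. The sketch below builds the stream setting and illustrates which parts of a modified item each view type would carry. The helper names and the sample `CustomerId` / `Plan` attributes are hypothetical, and `stream_payload` is only an illustration of the rules, not how the service is implemented.

```python
VALID_VIEW_TYPES = ("KEYS_ONLY", "NEW_IMAGE", "OLD_IMAGE", "NEW_AND_OLD_IMAGES")


def stream_specification(view_type):
    """Build the StreamSpecification setting that enables a stream."""
    if view_type not in VALID_VIEW_TYPES:
        raise ValueError(f"unknown stream view type: {view_type!r}")
    return {"StreamEnabled": True, "StreamViewType": view_type}


def stream_payload(view_type, key_names, old_item, new_item):
    """Illustrate what a stream record carries for a given view type."""
    if view_type not in VALID_VIEW_TYPES:
        raise ValueError(f"unknown stream view type: {view_type!r}")
    source = new_item if new_item is not None else old_item
    # The key attributes are included for every view type.
    payload = {"Keys": {name: source[name] for name in key_names}}
    if view_type in ("NEW_IMAGE", "NEW_AND_OLD_IMAGES") and new_item is not None:
        payload["NewImage"] = dict(new_item)
    if view_type in ("OLD_IMAGE", "NEW_AND_OLD_IMAGES") and old_item is not None:
        payload["OldImage"] = dict(old_item)
    return payload


# With boto3 installed (an assumption), the setting would be applied with:
#   boto3.client("dynamodb").update_table(
#       TableName="Customers",
#       StreamSpecification=stream_specification("NEW_AND_OLD_IMAGES"),
#   )

old = {"CustomerId": "42", "Plan": "free"}
new = {"CustomerId": "42", "Plan": "pro"}
for view_type in VALID_VIEW_TYPES:
    print(view_type, sorted(stream_payload(view_type, ["CustomerId"], old, new)))
```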

DynamoDB Streams is an optional feature that captures data modification events in DynamoDB tables. The data about these events appears in the stream in near real time, and in the order that the events occurred. Each event is represented by a stream record. If you enable a stream on a table, DynamoDB Streams writes a stream record whenever one of the following events occurs:

- If a new item is added to the table, the stream captures an image of the entire item, including all of its attributes.

- If an item is updated, the stream captures the “before” and “after” images of any attributes that were modified in the item.

- If an item is deleted from the table, the stream captures an image of the entire item before it was deleted.

Each stream record also contains the name of the table, the event timestamp, and other metadata. Stream records have a lifetime of 24 hours; after that, they are automatically removed from the stream.
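As a rough illustration of that metadata, the sketch below reads the table name, the event time, and the 24-hour lifetime from a single record. It assumes the record shape that AWS Lambda receives from a stream, where the table name is embedded in `eventSourceARN`. The sample values (account ID, region, stream label, item) are invented.

```python
from datetime import datetime, timedelta, timezone

STREAM_RETENTION = timedelta(hours=24)

# Invented sample record, shaped like the ones delivered to AWS Lambda.
sample_record = {
    "eventID": "1",
    "eventName": "INSERT",  # INSERT, MODIFY, or REMOVE
    "eventSource": "aws:dynamodb",
    "awsRegion": "us-east-1",
    "eventSourceARN": (
        "arn:aws:dynamodb:us-east-1:123456789012:"
        "table/Customers/stream/2024-01-01T00:00:00.000"
    ),
    "dynamodb": {
        "ApproximateCreationDateTime": 1_700_000_000,  # epoch seconds
        "Keys": {"CustomerId": {"S": "42"}},
        "NewImage": {"CustomerId": {"S": "42"}},
        "StreamViewType": "NEW_IMAGE",
    },
}


def table_name(record):
    """Extract the table name from the stream ARN."""
    # arn:aws:dynamodb:<region>:<account>:table/<name>/stream/<label>
    resource = record["eventSourceARN"].split(":", 5)[5]
    return resource.split("/")[1]


def created_at(record):
    """Return the approximate event time as a timezone-aware datetime."""
    seconds = record["dynamodb"]["ApproximateCreationDateTime"]
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


def is_expired(record, now):
    """True once the record is older than the 24-hour stream lifetime."""
    return now - created_at(record) >= STREAM_RETENTION


print(table_name(sample_record))              # Customers
print(created_at(sample_record).isoformat())  # 2023-11-14T22:13:20+00:00
```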

A DynamoDB Stream is a continuous pipeline of every modification made to a DynamoDB table. The moment an item is inserted, modified, or removed, the stream emits an event with information about the change, including (depending on the configured view type) the old and new versions of the modified item. Every DynamoDB table has the option to enable streams, which can be consumed by libraries provided by Amazon Web Services. Amazon provides an open source library for using DynamoDB Streams to replicate tables across regions, but this library needs to be run and maintained on a standalone server, so it cannot provide cross-region replication without setting up self-managed EC2 instances.

DynamoDB Streams enables powerful solutions such as data replication within and across AWS regions, materialized views of data in DynamoDB tables, data analysis using Kinesis materialized views, and much more.

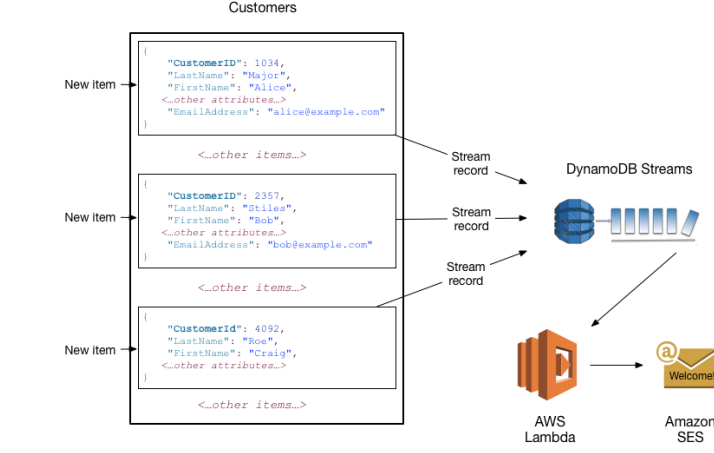

You can use DynamoDB Streams together with AWS Lambda to create a trigger—code that executes automatically whenever an event of interest appears in a stream. For example, consider a Customers table that contains customer information for a company. Suppose that you want to send a “welcome” email to each new customer. You could enable a stream on that table, and then associate the stream with a Lambda function. The Lambda function would execute whenever a new stream record appears, but only process new items added to the Customers table. For any item that has an EmailAddress attribute, the Lambda function would invoke Amazon Simple Email Service (Amazon SES) to send an email to that address.

Note: In this example, the last customer, Craig Roe, will not receive an email because he doesn’t have an EmailAddress.
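A minimal sketch of such a trigger is shown below. It assumes the stream is configured to include the new image, that `EmailAddress` is stored as a string attribute, and that boto3 is available in the Lambda runtime. The sender address, the email text, and the helper names are hypothetical.

```python
WELCOME_SENDER = "welcome@example.com"  # hypothetical verified SES sender


def send_welcome_via_ses(address):
    """Send the welcome email with Amazon SES (assumes boto3 is available)."""
    import boto3  # imported lazily so the rest of the sketch runs without it

    boto3.client("ses").send_email(
        Source=WELCOME_SENDER,
        Destination={"ToAddresses": [address]},
        Message={
            "Subject": {"Data": "Welcome!"},
            "Body": {"Text": {"Data": "Thanks for signing up."}},
        },
    )


def welcome_new_customers(event, send_email):
    """Email each newly added customer that has an EmailAddress attribute."""
    sent = 0
    for record in event.get("Records", []):
        if record.get("eventName") != "INSERT":
            continue  # only new items; skip MODIFY and REMOVE records
        new_image = record["dynamodb"].get("NewImage", {})
        address = new_image.get("EmailAddress", {}).get("S")
        if address:
            send_email(address)
            sent += 1
    return sent


def lambda_handler(event, context):
    """Entry point that Lambda would invoke for each batch of stream records."""
    return {"emails_sent": welcome_new_customers(event, send_welcome_via_ses)}
```

Passing the sender as a parameter keeps the filtering logic testable without AWS access. For example, with an invented batch in which only the first new customer has an address:

```python
event = {
    "Records": [
        {"eventName": "INSERT", "dynamodb": {"NewImage": {
            "CustomerName": {"S": "Sample Customer"},
            "EmailAddress": {"S": "sample@example.com"}}}},
        {"eventName": "MODIFY", "dynamodb": {"NewImage": {
            "EmailAddress": {"S": "existing@example.com"}}}},
        {"eventName": "INSERT", "dynamodb": {"NewImage": {
            "CustomerName": {"S": "Craig Roe"}}}},
    ]
}
outbox = []
print(welcome_new_customers(event, outbox.append))  # 1
print(outbox)  # ['sample@example.com']
```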